Concepts for Understanding AI

This week introduces core concepts that support later discussions of symbolic AI, machine learning, and large language models. The goal is not to learn implementation details. Instead, the goal is to introduce a set of basic ideas that will help you make sense of how AI systems behave and how claims about AI are made in real workflows.

Some of these concepts originate from mathematics, statistics, or computer science. Understanding them at a technical level is not required for this course. I will provide optional advanced tutorials for students who are interested in going further. These materials are intended to support deeper exploration, not to set expectations for assessment. If you want a more detailed understanding of how AI systems operate under the hood, these concepts remain essential, but this course focuses on how they function within information systems and professional practice.

Data, Information, and Knowledge

In information work, the same artifact can be treated as data, information, or knowledge depending on how it is used.

Data refers to recorded values or signals that can be stored and processed. Data can be numeric, textual, visual, or behavioral. Data does not explain itself. It becomes meaningful when a system or a person interprets it in context.

Information is data that has been organized so it can address a particular question or need. A library catalog record can function as information when it helps you locate a book. A table can function as information when it supports comparison. Information depends on structure, context, and use.

Knowledge refers to an understanding that people rely on to support decisions or action. In practice, knowledge involves interpretation, judgment under uncertainty, and evaluation of evidence.

In AI systems, data often serves as input, and information is often what is presented to users. Systems may also store structured knowledge artifacts, such as knowledge graphs, that encode claims or relationships.

Hands on: Identifying data, information, and knowledge

Goal: Practice distinguishing data, information, and knowledge using concrete, familiar examples.

Instructions:

Below are several examples drawn from common information systems. For each example, think about whether it is best understood as data, information, or knowledge. Some examples may feel ambiguous. Focus on how the item is being used, not on finding a single correct label.

Examples

-

A CSV file listing book IDs and checkout timestamps exported from a library system.

-

A dashboard showing the top ten most borrowed books in the last month.

-

A librarian’s written recommendation to purchase more copies of a title because current copies have long waitlists.

-

A transcript of a recorded reference interview.

-

A short report stating that weekend staffing should be increased because user questions peak on Saturdays.

-

A log of user search queries collected by a discovery system.

-

A chart comparing average response time before and after a new chatbot was deployed.

-

A decision to keep the chatbot in place because it reduced response time without lowering answer quality.

How to use this activity

- Read through the examples and classify each one mentally as data, information, or knowledge.

- Notice which cases feel clear and which feel uncertain.

Think about

- Which examples were easiest to classify, and why.

- Which examples felt unclear or could fit more than one category.

- What kind of interpretation or judgment is needed to move from data to information, or from information to knowledge.

There is no single correct answer for every example. The purpose of this activity is to practice reasoning about how these terms are used in real information systems.

Further information:

- DIKW Pyramid: EBSCO article, Wikipedia page

Algorithms as Processing, Ranking, and Prediction

An algorithm is a procedure that transforms inputs into outputs through defined steps. In information systems, algorithms can support processing, ranking, and prediction.

Processing algorithms transform content into a usable representation. Examples include tokenization, OCR, deduplication, and entity extraction. These steps change what the system can see and act on. Common AI-related examples:

- OCR (optical character recognition) systems: convert scanned documents into searchable text;

- Named Entity Recognition (NER) models: identify people, organizations, or locations in news articles or legal documents.

- Embedding models: convert text or images into vectors for comparison.

Ranking algorithms order items for display. Search results, recommendations, and feed ordering are ranking outcomes. Ranking depends on what the system treats as relevant and what signals it uses. Common AI-related examples:

- Web search ranking algorithms: order pages based on relevance signals rather than publication order;

- Recommender system ranking components: sort candidate items before they are shown to users;

- Library or academic discovery system ranking: determine which records appear on the first page of results.

Prediction algorithms estimate what is likely to happen or what a user is likely to want. Examples include click prediction, spam likelihood, and estimated time of arrival. Prediction does not guarantee correctness. It provides a best estimate under uncertainty. Common AI-related examples:

- Spam detection models: estimate the likelihood that an email message is spam;

- Click-through rate prediction models: estimate the probability that a user will click on a result or recommendation;

- Travel or delivery time estimation systems: predict how long a trip or shipment is likely to take.

These categories overlap in practice. A single system can process content, rank candidates, and predict outcomes in one workflow. Common system-level examples:

- Search engines: process documents through text extraction and indexing, predict relevance to a query, and rank results for display;

- Recommender systems: process user behavior data, predict user interest in candidate items, and rank those items before presentation;

- Large language model applications: process input text, predict likely next tokens, and rank candidate outputs before generating a response.

Hands on: Comparing search results across search engines

Goal: Observe how different search engines produce different results for the same query, and relate those differences to processing, ranking, and prediction.

This activity is for observation and reflection only. You do not need to submit anything.

Instructions:

Choose one search query and use it on at least two different search engines. Examples of search engines include Google, Bing, DuckDuckGo.

Steps:

-

Choose one simple, neutral query. Examples include a book title, a public organization, a historical event, or a common how-to question.

-

Search for the exact same query on two or more search engines.

-

Look only at the first page of results on each platform.

-

Compare what you see across platforms.

What to observe:

- Are the results the same or different?

- Do you see differences in wording, summaries, or result formats?

- Is the order of results the same across platforms?

- Do some platforms show special elements such as featured answers, knowledge panels, or suggested follow-up questions?

Connecting observations to concepts:

As you compare results, think about which type of system function might best explain each difference.

- Differences in how content appears or is summarized may relate to processing.

- Differences in the order of results may relate to ranking.

- Differences that seem tailored to you, your location, or your prior activity may relate to prediction.

You are not expected to know how any system works internally. Focus only on what is visible to you as a user.

Think about:

- Which differences were easiest to notice?

- Which differences were harder to explain?

- How might these differences shape what users notice, trust, or choose to click?

Further information:

- Google Search documentation on ranking and results

- The history of Amazon's recommendation algorithm

- Algorithms are everywhere

Data Representation

AI systems depend on how data is represented. Representation is not only storage format. It is also the set of properties the system can use for processing and comparison.

Structured and unstructured data

Structured data follows a consistent schema. Examples include tables, relational databases, CSV files, and many metadata records stored in standardized formats. Structured formats support reliable querying, aggregation, validation, and constraints.

In AI-related tasks, structured data often appears as spreadsheets or CSV files used for training and evaluation, JSON files that store labeled examples or configuration settings, database tables used for logging user behavior, and metadata records encoded in formats such as MARC or Dublin Core.

Unstructured data does not follow a fixed schema. Examples include free text documents, PDFs, images, audio recordings, and video files. Unstructured data can still be organized, but organization often requires extra steps such as text extraction, segmentation, tagging, transcription, or embedding.

In AI workflows, unstructured data commonly includes news articles used for text classification, social media posts analyzed for sentiment, scanned documents processed with OCR, images used for object recognition, and audio files used for speech-to-text tasks.

Many information organization problems occur at the boundary between these categories. A PDF may contain text, tables, and images, but its structure may be unclear to a system. A JSON file may follow a schema, but its fields may vary across records. A collection of emails may contain valuable information, but headers, bodies, and attachments are often inconsistent or incomplete.

Features

A feature is a measurable attribute that a model uses when performing a specific task. In a library or information context, features might include publication year, subject terms, circulation history, or text-based signals extracted from documents.

Features are design choices. They determine which aspects of the data can influence a system’s output. If something is not represented as a feature, the system cannot account for it, even if it matters to users or professionals.

Selecting appropriate features can improve performance for a clearly defined task, such as ranking search results or predicting which items are likely to be used. However, feature choices also reflect assumptions about what matters for that task.

Some features may act as proxies for sensitive attributes. For example, a feature that reflects location or past behavior may indirectly correlate with socioeconomic background or resource access. When such relationships are not visible or understood, system outputs can appear opaque or unfair, reducing user trust.

Vectors

A vector is a list of numbers that represents an item. Vectors allow systems to compute similarity. Many modern AI workflows use vectors to compare texts, images, or users based on patterns rather than exact matches.

In practice, vectors support tasks like semantic search, clustering, and recommendation. A vector representation does not preserve all meaning. It preserves what the representation method captures as salient for comparison.

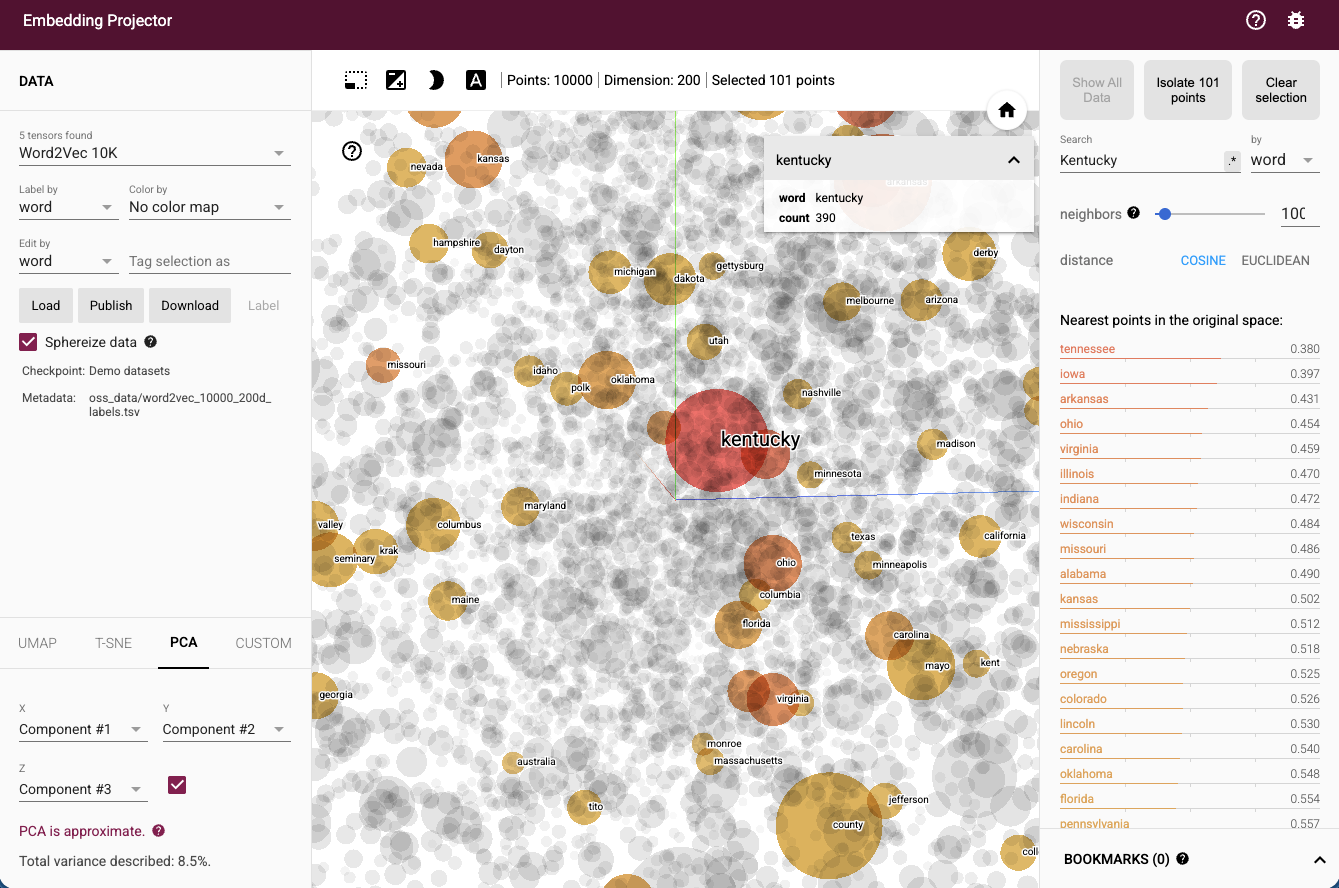

Hands on: Exploring vectors as similarity tools with no coding

Goal: See how vector representations support similarity and grouping.

Steps:

- Open the TensorFlow Embedding Projector.

-

Use the default dataset and search for a few familiar words. Examples include library, archive, health, music, privacy.

-

Click a word and observe which items appear nearby.

Think about

- What kinds of similarity the tool seems to capture.

- Which neighbors feel reasonable and which feel surprising.

- Why similarity can be useful even when it is not a definition of meaning.

Further information:

- IBM - Structured vs. unstructured data: What's the difference?

- IBM - What is feature selection?

- IBM - What is vector embedding?

Probability, Statistics, and AI Reasoning

It is common for AI systems to express uncertainty using numbers that appear precise. These numbers often describe likelihood, not certainty, and they should be interpreted with care.

For example, if a system reports that an email has a 90% probability of being spam, this does not mean the system is 90% confident in a single, human-like sense. It reflects the system’s estimate based on patterns in prior data.

If a recommender system predicts a 70% chance that a user will click an item, this does not imply that the user will click in 7 out of 10 future interactions. It means the system assigns a higher estimated likelihood than other options and often uses these estimates as inputs when ranking what to show.

Across these cases, probability values are best treated as estimates under uncertainty. Numbers can make uncertainty look objective or authoritative, but they remain shaped by data, assumptions, and context.

Hands on: Observing AI predictions in public forecasting markets

Goal: Observe how AI systems express predictions as probabilities in real-world forecasting platforms.

Instructions:

Visit a public forecasting platform where AI agents generate or contribute to predictions.

One example is: https://oraclemarkets.io/predictions

Steps:

-

Choose one prediction question shown on the platform. Examples may include questions about elections, markets, technology adoption, or major public events.

-

Note how the prediction is presented.

-

Read any description of how the prediction is generated.

-

Observe how the prediction may change over time.

Think about:

-

What does the probability claim mean in this context? Does it describe certainty about a single future event, or uncertainty based on current information?

-

What assumptions might the AI agent be relying on? Consider data sources, past patterns, or modeled scenarios.

-

How might users misinterpret this probability if it is treated as a guarantee?

-

How does seeing an AI agent make a prediction affect your trust in the output compared to a human expert or a market consensus?

Further information:

What to carry forward

This week provides four anchors for the rest of the course.

First, data, information, and knowledge are different levels of interpretation and accountability.

Second, algorithms can be analyzed by whether they process, rank, or predict.

Third, representation choices determine what a system can see and compare.

Fourth, uncertainty is a normal condition in AI outputs and should be interpreted rather than ignored.