A Brief History of AI

Over time, AI has evolved from symbolic approaches to data-driven learning and, most recently, to generative models, reflecting both changing views of intelligence and advances in computing technology.

1930s–1950: Foundations

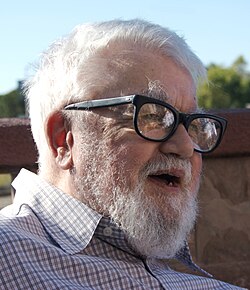

1936

Alan Turing publishes On Computable Numbers, introducing the Turing machine as a formal model of computation.

1950

Alan Turing publishes Computing Machinery and Intelligence.

Introduces the Imitation Game (Turing Test) as an evaluation criterion for machine intelligence.

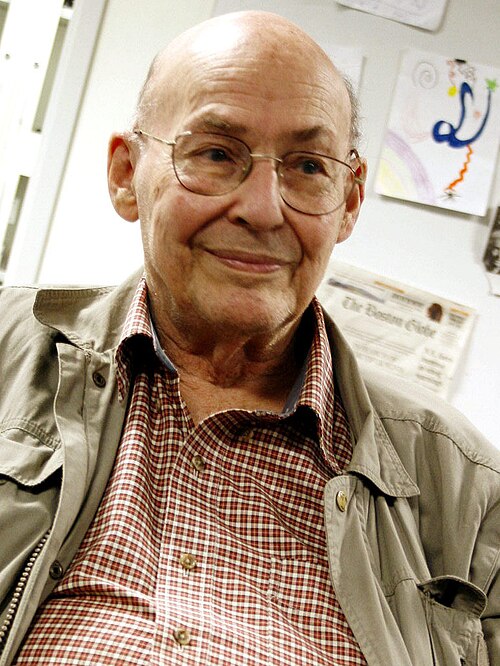

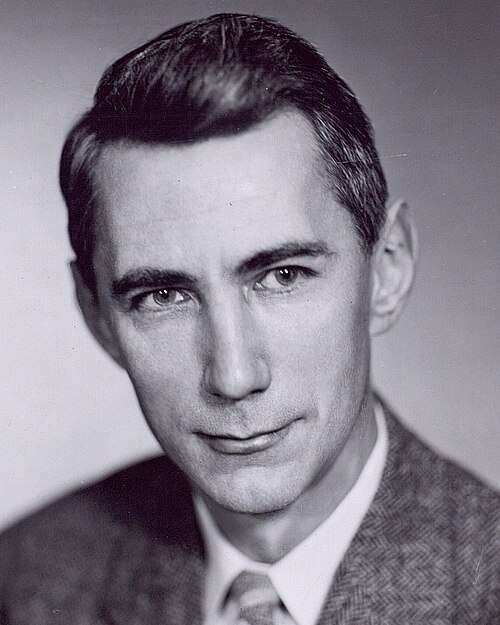

1956: Birth of AI as a Field

1956

The Dartmouth Summer Research Project on Artificial Intelligence is held.

Key figures include:

- John McCarthy

- Marvin Minsky

- Claude Shannon

Significance:

- Popularized the term "Artificial Intelligence," which John McCarthy coined in the 1955 proposal for the workshop

- Established AI as a distinct field

1950s–1990s: Symbolic / Logic-Based AI

Core idea:

Many early AI researchers believed that intelligence could be achieved by encoding expert-curated knowledge and rules, thereby mimicking human logical reasoning.

Characteristics:

- Rule-based systems (if–then rules)

- Formal representation of information, knowledge, and rules

- Strong links to ontologies, taxonomies, and knowledge organization
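To make the if–then idea concrete, here is a minimal sketch of forward chaining, one common way a rule-based system can derive new facts from known ones. The rules, fact names, and the `forward_chain` helper are hypothetical and chosen only for illustration; they are not taken from any of the historical systems named in this section.

```python
# Hypothetical rules: each pairs a set of required facts with a conclusion.
RULES = [
    ({"has_fever", "has_cough"}, "possible_flu"),
    ({"possible_flu", "short_of_breath"}, "refer_to_doctor"),
]


def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


derived = forward_chain({"has_fever", "has_cough", "short_of_breath"}, RULES)
print(sorted(derived))
```

Every conclusion here traces back to a rule that a person wrote by hand, which is why such systems are easy to inspect but costly to extend.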

Well-known examples:

- Early Natural Language Systems (1960s–1970s): ELIZA and SHRDLU

- Expert Systems in Industry (1970s–1980s): MYCIN (a research medical expert system) and XCON/R1

- Japan’s Fifth Generation Computer Systems Project (1982–1992): A national initiative focused on logic programming (e.g., Prolog) and symbolic AI, which failed to meet its goals despite substantial investment.

- IBM Deep Blue (1997): A chess system that defeated world champion Garry Kasparov by combining large-scale game-tree search with hand-crafted evaluation functions, rather than by learning from data.

Limitations:

- Knowledge acquisition bottleneck: required extensive rules and domain knowledge, which were costly to construct and maintain.

- Difficult to scale beyond small, well-defined domains

- These limitations led to AI winters, periods when funding and public interest declined, particularly around 1974–1980 and 1987–2000.

1980s–2010s: Data-Driven AI (Machine Learning and Deep Learning)

Key idea:

Instead of being programmed step by step, machine learning systems learn by examining many examples (data) and identifying patterns in them.
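As a rough illustration of learning from examples, the sketch below builds a toy text classifier from word counts. The example messages, the labels, and the `train` and `classify` helpers are all made up for this illustration; real spam filters use far more data and more careful statistics.

```python
from collections import Counter

# Hypothetical labeled examples (invented for illustration).
EXAMPLES = [
    ("win free prize now", "spam"),
    ("free money click now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday with the team", "ham"),
]


def train(examples):
    """Count how often each word appears under each label."""
    counts = {}
    for text, label in examples:
        counts.setdefault(label, Counter()).update(text.split())
    return counts


def classify(counts, text):
    """Pick the label whose training words best match the text."""
    words = text.split()
    scores = {label: sum(c[w] for w in words) for label, c in counts.items()}
    return max(scores, key=scores.get)


model = train(EXAMPLES)
print(classify(model, "free prize inside"))
print(classify(model, "agenda for the team meeting"))
```

No rule about the word "free" was ever written by hand; the association comes entirely from the labeled examples, so changing the examples changes the behavior.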

Early machine learning (1980s–1990s):

- Handwriting recognition

- Speech recognition systems

- Email spam filtering and text classification

Deep learning era (2000s–2010s):

- Larger neural networks trained on massive datasets, made possible by increased computing power (e.g., GPUs from Nvidia)

- Breakthroughs in areas such as computer vision, speech recognition, and machine translation.

- Examples include AlphaGo by DeepMind, which defeated world champion Lee Sedol at Go in 2016; advanced driver-assistance systems (e.g., Tesla Autopilot); and AlphaFold by DeepMind for protein structure prediction.

- Advances in deep learning have been linked to Nobel Prize-winning achievements, including the 2024 Nobel Prize in Physics, shared by Geoffrey Hinton and John Hopfield for foundational work on artificial neural networks, and the 2024 Nobel Prize in Chemistry, in which Demis Hassabis and John Jumper were recognized for protein structure prediction.

Limitations:

- Black box: It is often hard to understand or explain a system's decision-making process, even for its designers. Further information can be found in this paper.

- Data dependence: Machine learning systems are only as good as the data they learn from. Biased or low-quality data leads to biased or unreliable results. Here is an example.

2017–Present: Attention Is All You Need

2017

Building on earlier work in deep learning and neural networks, a team of researchers at Google published a groundbreaking paper in 2017 titled Attention Is All You Need. This work introduced the Transformer architecture and is widely recognized as a major milestone that enabled the development of modern generative AI.

However, progress was not instant. When the Transformer architecture was first introduced, it did not immediately become a sustained strategic priority within Google.

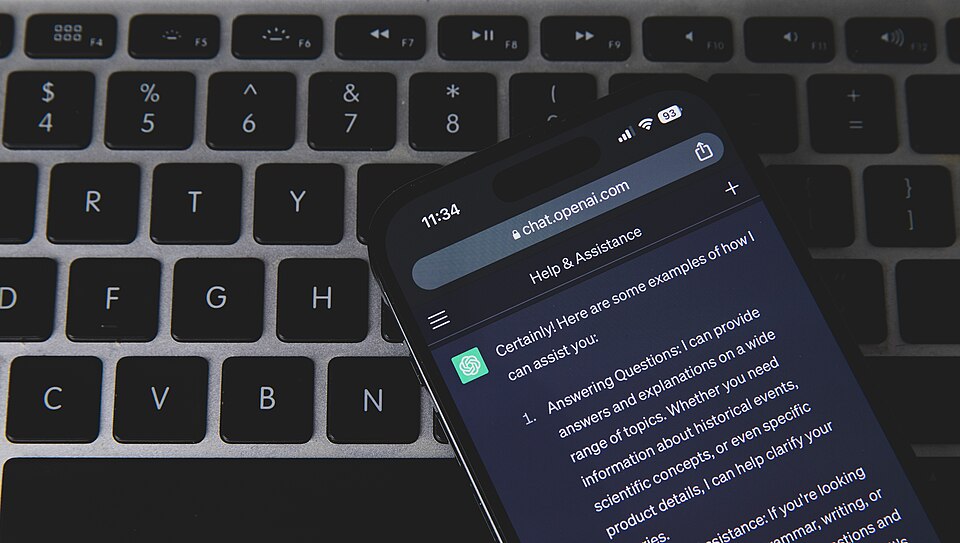

2022–Present

Instead, OpenAI, a company founded in 2015, was among the first to successfully deploy Transformer-based large language models at scale. The release of ChatGPT in November 2022 marked the first time a large language model achieved widespread public adoption and commercial success.

Subsequent examples of large language models include Claude by Anthropic, Gemini by Google, and Grok by xAI.

Large language models demonstrate a range of language-related capabilities, including:

- Text generation

- Summarization

- Translation

- Question answering

- Code generation

Governments have recognized the importance of artificial intelligence development. For example, the United States has introduced several initiatives and policies, such as the Genesis Mission, launched in November 2025 (https://www.whitehouse.gov/presidential-actions/2025/11/launching-the-genesis-mission/).

At the same time, industry has invested heavily in building AI data centers, reflecting ambitious efforts toward achieving artificial general intelligence (AGI).

However, despite their abilities, large language models also have limitations:

- Hallucinations: models may produce incorrect information while presenting it with high confidence

- Dependence on training data quality: biased or low-quality data can lead to unreliable outputs

- Environmental impact: training and deploying large models requires substantial electricity and computing resources

- Copyright concerns: the use of copyrighted materials in training data remains contested